Whitepaper · ~15 min read

The Interpretation of Business Questions

How Spotonix thinks like your best analyst: a knowledge graph approach to composable, explainable business intelligence.

Harish Butani

Co-founder & CTO

Venkatesh Seetharam

Co-founder & CEO

Self-Service vs. Semantic Richness

Business users need fast, self-service access to insights about performance and planning, but existing solutions force an unacceptable tradeoff: traditional BI tools offer semantic precision but require gatekeepers and long turnaround times, while text-to-SQL solutions provide self-service but lack semantic richness and trustworthiness.

The BI Reporting Landscape

Until recently, business questions were answered through two primary paths: direct interaction with pre-built BI artifacts (dashboards, reports, notebooks), or through a BI analyst intermediary who would either provide an existing artifact or develop a new one on demand.

The Direct Path: Business users navigate existing dashboards and reports, filtering and slicing pre-defined metrics and dimensions. This approach offers immediate access but is constrained by what has been pre-built — users are limited to anticipated questions and cannot easily explore novel analyses.

The Analyst-Mediated Path: For questions that existing artifacts cannot answer, users engage a BI analyst who interprets the request, determines the appropriate data sources and calculations, and produces a new report, dashboard, or notebook. This path enables arbitrary complexity but introduces significant latency (days to weeks) and creates bottlenecks as organizations scale.

The Agentic Approach: Large language models have enabled conversational agents that interpret natural language questions and generate SQL on demand. This promises self-service flexibility with rapid turnaround, eliminating both the pre-built artifact constraint and the human bottleneck.

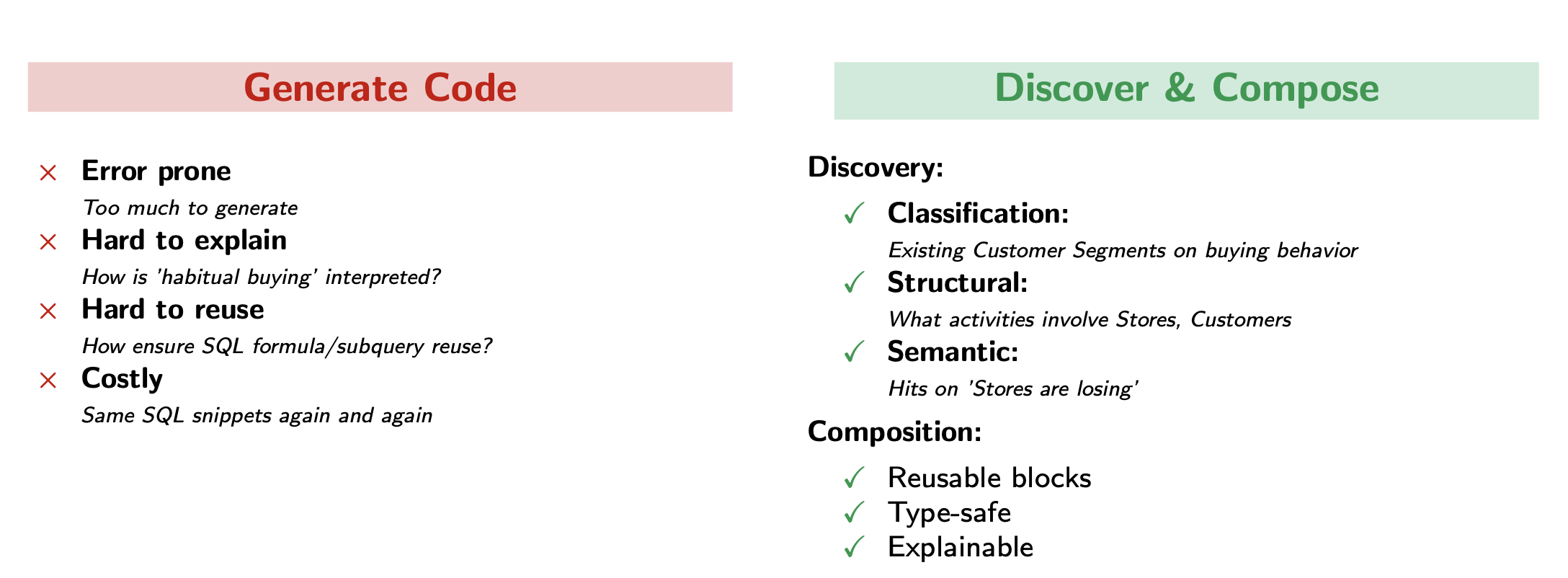

Why Text-to-SQL Agents Fall Short

While text-to-SQL agents promise self-service flexibility with rapid turnaround, they suffer from three fundamental limitations:

Semantic Gap. SQL operates at the lowest semantic level: the agent must translate a business question into table joins, subqueries, window functions, and filters. For anything beyond simple slice-and-dice queries, it is extremely difficult to trace the generated SQL back to the original business question. The business logic gets buried in WHERE clauses and CTEs that are nearly impossible to validate.

Missing Composability. Text-to-SQL agents regenerate logic from scratch for each question, creating "islands of semantics" with no connection to related analyses. There's no way to discover that "habitual buying customers" was already defined, or to reuse "Year-to-Date" calculation patterns across contexts.

Black Box Reasoning. The LLM-based generation process is opaque. Business users cannot validate whether the query correctly captures their intent without understanding both SQL and the data model. The agent cannot explain why it chose specific joins, how it interpreted business terms, or what assumptions it made.

How Expert Analysts Think

To understand what's needed for AI-powered BI interpretation, we must first understand how expert analysts actually work. They don't think in terms of SQL generation — they think compositionally and categorically.

When faced with a new question like "Which stores are losing habitual buying customers?", an analyst's mental process involves:

- Discovery: "Have we analyzed customer behavior patterns before? How was 'habitual buying' defined?"

- Pattern matching: "This is a change-over-time analysis with customer segmentation."

- Composition: "I can reuse the 'Habitual Customers' definition and combine it with store-level trending."

Analysts don't start from scratch. They identify what's similar to previous work, then transform or compose existing building blocks. They might swap "Sales" for "Returns," change "Year-over-Year" to "Month-over-Month," or reuse a customer segment in a different context. This compositional thinking enables rapid analysis, consistency, and explainability.

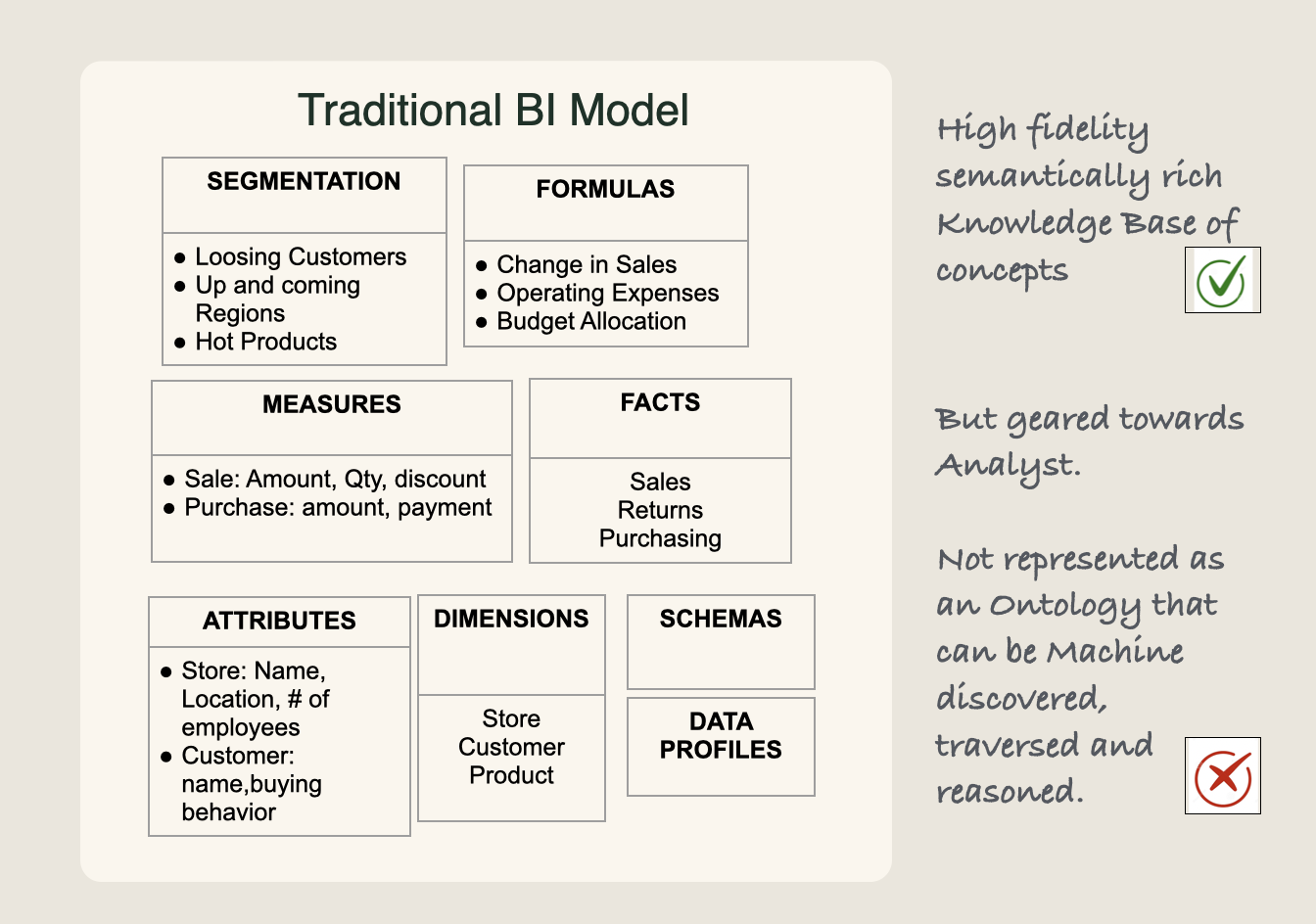

The Problem with BI Catalogs

Traditional BI platforms (Power BI, Tableau, Looker) maintain semantically rich catalogs — dimensions, metrics, hierarchies, and business logic. However, they're optimized for human analysts, not AI agents. When analysts need to discover relevant analyses, they rely on out-of-band discovery: discussions with peers, wikis, Slack channels, and tribal knowledge.

This works for humans who can interpret documentation and make intuitive leaps. But it doesn't scale to AI agents — they need machine-discoverable, traversable, structured knowledge.

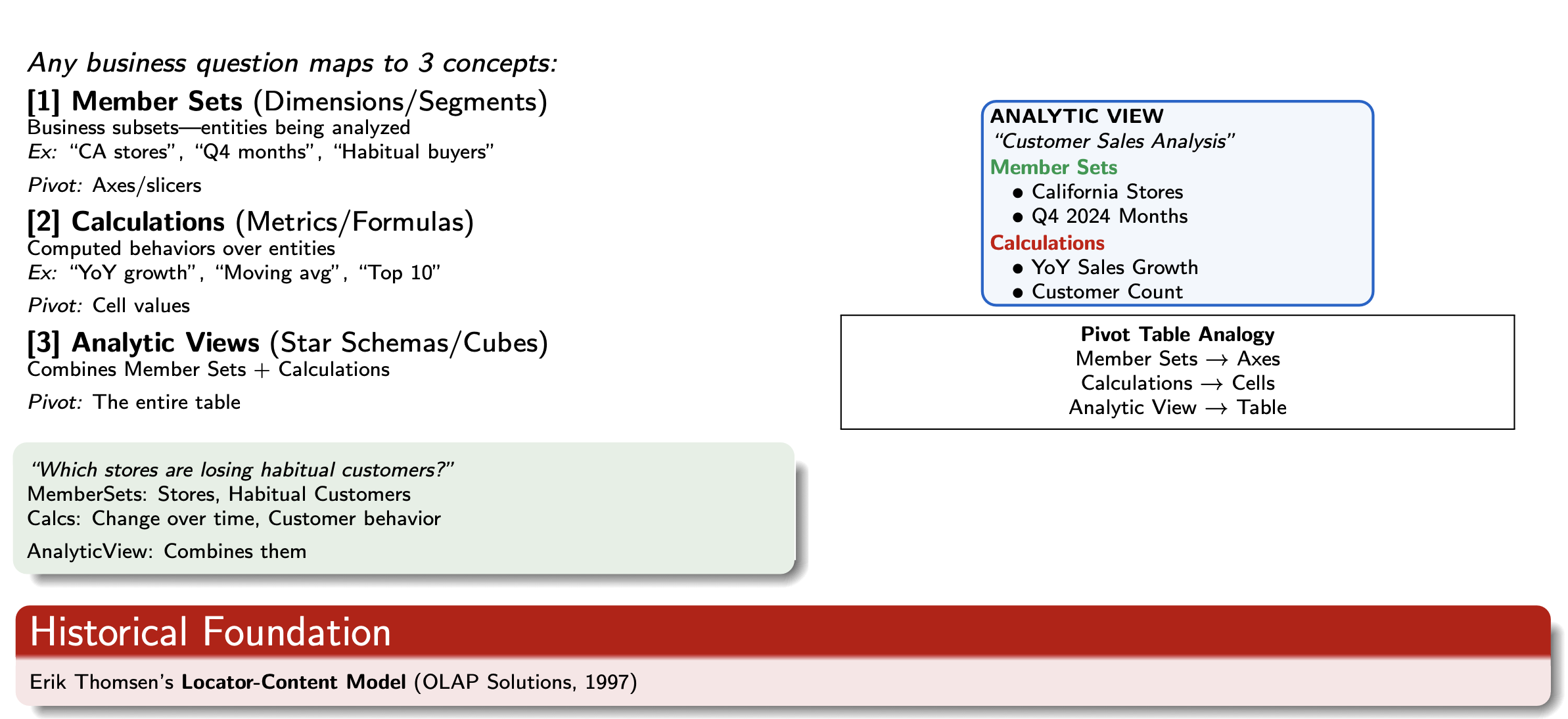

Three Fundamental Concepts

Any business question, regardless of complexity, maps to three fundamental concepts:

Segmentations — Business entity collections that define who, what, when, where we're analyzing. Examples: "California Stores," "Q4 2024 Months," "Habitual Buying Customers," "Top 10 Products."

Calculations — Business logic that defines how much, how many, how different we're measuring. Examples: "Year-over-Year Sales Growth," "Customer Lifetime Value," "% Contribution."

Business Analyses — Combinations of Segmentations with Calculations that answer complete business questions. The full analysis.

These concepts reference each other, forming a compositional graph. Segmentations can reference Calculations ("Top Customers by Sales"). Calculations can reference other Calculations ("Gross Margin = Sales - Cost"). Business Analyses combine both into complete answers. This compositional structure enables arbitrarily complex analyses while preserving explainability.
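As a rough illustration of the compositional graph described above, the three concepts could be modeled as small immutable records that reference one another. This is a minimal sketch, not Spotonix's actual data model: the class fields, the `children` helper, and the `explain` function are all hypothetical names introduced here.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple, Union


@dataclass(frozen=True)
class Calculation:
    name: str
    inputs: Tuple["Calculation", ...] = ()  # calculations this one builds on


@dataclass(frozen=True)
class Segmentation:
    name: str
    ranked_by: Optional[Calculation] = None  # e.g. "Top Customers by Sales"


@dataclass(frozen=True)
class BusinessAnalysis:
    name: str
    segmentations: Tuple[Segmentation, ...]
    calculations: Tuple[Calculation, ...]


Node = Union[Calculation, Segmentation, BusinessAnalysis]


def children(node: Node) -> Tuple[Node, ...]:
    """Return the building blocks a node directly references."""
    if isinstance(node, Calculation):
        return node.inputs
    if isinstance(node, Segmentation):
        return (node.ranked_by,) if node.ranked_by is not None else ()
    return node.segmentations + node.calculations


def explain(node: Node, depth: int = 0) -> List[str]:
    """Trace the composition chain as indented lines."""
    lines = ["  " * depth + f"{type(node).__name__}: {node.name}"]
    for child in children(node):
        lines.extend(explain(child, depth + 1))
    return lines


sales = Calculation("Sales")
cost = Calculation("Cost")
gross_margin = Calculation("Gross Margin", inputs=(sales, cost))
top_customers = Segmentation("Top Customers by Sales", ranked_by=sales)
analysis = BusinessAnalysis(
    "Gross Margin for Top Customers", (top_customers,), (gross_margin,)
)
trace = explain(analysis)
print("\n".join(trace))
```

Because every node only points at other named business concepts, the explanation of an analysis is just a walk over its references, which is what keeps composition and explainability tied together.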

The Knowledge Graph Approach

By representing Segmentations, Calculations, and Business Analyses as richly connected nodes in a knowledge graph, we transform tribal knowledge into systematic, machine-discoverable patterns.

In this knowledge graph, AI agents can:

- Search by semantics — find "habitual customers" through embedding similarity

- Search by structure — query "Growth calculations where input is Sales"

- Search by pattern — discover all "Customer segmentations based on ranking"

- Compose algebraically — transform analyses by swapping components

- Explain reasoning — trace back through the composition chain

This is fundamentally different from text-to-SQL. Instead of generating low-level code from scratch every time, the system discovers what's already known, composes from reusable building blocks, validates the interpretation in business terms, and learns — capturing the reasoning for next time.
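The structural and pattern-based searches listed above might look something like the following over a toy in-memory graph. This is only a sketch under stated assumptions: the `Node` record, the pattern tags, and both search functions are hypothetical, and semantic (embedding) search is left out because it would depend on a specific embedding model.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Set, Tuple


@dataclass(frozen=True)
class Node:
    name: str
    kind: str                      # "segmentation", "calculation", or "analysis"
    patterns: FrozenSet[str]       # hypothetical pattern tags
    inputs: Tuple[str, ...] = ()   # names of the nodes this one builds on


GRAPH = [
    Node("Sales", "calculation", frozenset({"measure"})),
    Node("Returns", "calculation", frozenset({"measure"})),
    Node("YoY Sales Growth", "calculation", frozenset({"growth"}), ("Sales",)),
    Node("Top 10 Customers by Sales", "segmentation",
         frozenset({"customer", "ranking"}), ("Sales",)),
    Node("Habitual Buying Customers", "segmentation",
         frozenset({"customer", "behavior"})),
]


def search_by_structure(graph: List[Node], kind: str, pattern: str,
                        input_name: str) -> List[str]:
    """Find nodes of a kind and pattern that take a given input."""
    return [n.name for n in graph
            if n.kind == kind and pattern in n.patterns and input_name in n.inputs]


def search_by_pattern(graph: List[Node], kind: str,
                      required: Set[str]) -> List[str]:
    """Find nodes of a kind whose tags include every required pattern."""
    return [n.name for n in graph if n.kind == kind and required <= n.patterns]


# "Growth calculations where input is Sales"
growth_on_sales = search_by_structure(GRAPH, "calculation", "growth", "Sales")
# "Customer segmentations based on ranking"
ranked_customers = search_by_pattern(GRAPH, "segmentation", {"customer", "ranking"})
print(growth_on_sales, ranked_customers)
```

A production system would presumably back these queries with a graph store rather than a list scan; the point here is only that both searches operate on business-level attributes, not on SQL text.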

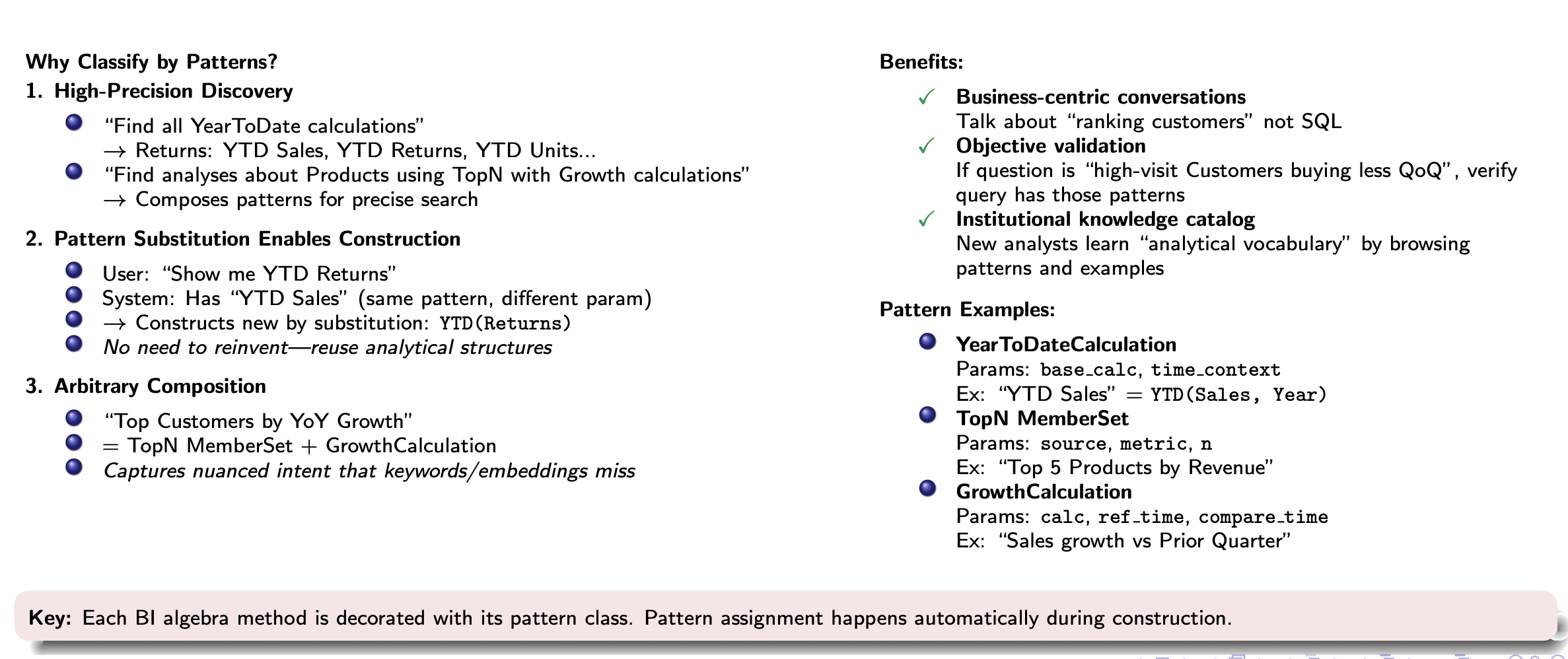

Analysis Patterns

Enterprise analyst teams work with large numbers of datasets and analyses. For a new AI agent (or human analyst), making sense of such a large catalog is daunting. How do expert analysts tackle new business questions? They associate calculation and segmentation features with the question, then look for past analyses that share those features.

We formalize this technique with Analysis Patterns — a classification system that enables both discovery (finding relevant existing analyses) and construction (building new analyses by reusing structures).

Discovery and Construction Working Together

Analysis Patterns unify two complementary strategies for answering business questions: discovery finds existing analyses, construction builds new ones, and both use the same analytical vocabulary.

When the system encounters "Show me Year-to-Date Returns," it first searches for similar existing calculations using pattern-based discovery. If it finds "YTD Sales," it recognizes this is the same pattern with a different input parameter. Rather than starting from scratch, the system reuses the analytical structure — substituting the input to construct the new calculation.

The newly constructed calculation is automatically tagged with the same pattern, making it discoverable for future questions. This creates a virtuous cycle: Every construction enriches discovery. Every discovery accelerates construction.
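The "YTD Sales" to "YTD Returns" example above can be sketched as a lookup followed by a substitution. The following is an illustrative sketch only: the `Calculation` fields, the `catalog` dictionary, and `discover_or_construct` are hypothetical stand-ins for whatever the real system uses.

```python
from dataclasses import dataclass, replace
from typing import Dict, Optional, Tuple


@dataclass(frozen=True)
class Calculation:
    name: str
    pattern: str        # analysis pattern tag, e.g. "year-to-date"
    input_measure: str  # the measure the pattern is applied to


catalog: Dict[str, Calculation] = {
    "YTD Sales": Calculation("YTD Sales", "year-to-date", "Sales"),
}


def discover_or_construct(
    catalog: Dict[str, Calculation], pattern: str, measure: str, name: str
) -> Tuple[Optional[Calculation], str]:
    # Discovery: an existing calculation with the same pattern and input.
    for calc in catalog.values():
        if calc.pattern == pattern and calc.input_measure == measure:
            return calc, "discovered"
    # Construction: reuse a same-pattern calculation, substituting the input.
    for calc in list(catalog.values()):
        if calc.pattern == pattern:
            new_calc = replace(calc, name=name, input_measure=measure)
            catalog[new_calc.name] = new_calc  # keeps the pattern tag, so it is discoverable
            return new_calc, "constructed"
    return None, "not found"


first = discover_or_construct(catalog, "year-to-date", "Returns", "YTD Returns")
second = discover_or_construct(catalog, "year-to-date", "Returns", "YTD Returns")
print(first[1], second[1])
```

The second call finds the calculation that the first call constructed, which is the virtuous cycle in miniature.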

Why Patterns Matter

Business-Centric Conversations. The system converses in higher-level abstractions such as "ranking customers by growth" rather than SQL window functions and subqueries. This enables high-value clarifying questions like "What behavior do you want to rank customers by?"

Objective Validation. By mapping ambiguous natural language into formal pattern features, we enable objective validation. If the question is "high-frequency customers with declining QoQ purchases," we can verify that the interpretation contains a frequency-based customer segmentation and a quarter-over-quarter change calculation that measures decline.

Institutional Knowledge. The pattern library becomes a catalog of how the organization thinks analytically. New analysts learn the analytical vocabulary by browsing patterns and examples. Over time, it captures not just generic patterns but enterprise-specific ones.
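One way to picture the objective validation described above is as a set comparison between the pattern features a question implies and the features present in the proposed interpretation. This is a minimal sketch: the feature names and the `validate_interpretation` function are assumptions made for illustration.

```python
from typing import Dict, Set


def validate_interpretation(required: Set[str], interpreted: Set[str]) -> Dict[str, object]:
    """Compare the features a question implies with those an interpretation has."""
    missing = required - interpreted
    extra = interpreted - required
    return {"valid": not missing, "missing": sorted(missing), "extra": sorted(extra)}


# Hypothetical features for "high-frequency customers with declining QoQ purchases".
required_features = {
    "customer-segmentation",
    "frequency-threshold",
    "quarter-over-quarter-change",
    "decline",
}

# An interpretation that picked year-over-year instead of quarter-over-quarter.
candidate = {
    "customer-segmentation",
    "frequency-threshold",
    "year-over-year-change",
    "decline",
}

report = validate_interpretation(required_features, candidate)
print(report)
```

The report names the mismatch in business terms (a missing quarter-over-quarter change), which a user can confirm or correct without reading SQL.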

Interpreting Questions

Business questions are inherently ambiguous and complex. "Which stores are losing habitual buying customers?" requires understanding what "habitual" means, what "losing" means, which time period to consider, and how to measure the relationship between stores and customer behavior.

Rather than producing an answer in a single pass, a better approach mirrors how expert analysts work: iteratively refine understanding through discovery, reasoning, and feedback.

The Interpretation Loop

The interpretation process combines four techniques that work together iteratively:

1. Language Understanding. Parse the question to identify key phrases and analytical intent. "Habitual buying" maps to a customer behavioral pattern. "Losing" maps to negative change over time. "Stores" maps to a grouping dimension.

2. Knowledge Graph Discovery. Search the graph using multiple strategies — semantic search (embedding similarity), pattern-based search (analytical structure), and structural search (navigating relationships).

3. LLM-Guided Reasoning. Use the LLM to fill gaps, adapt existing elements, and construct new ones. If "Frequent Buyers" exists but lacks a recency constraint, the LLM reasons: "Add recency filter to match 'habitual' behavior."

4. Iterative Refinement. Multiple feedback loops ensure correctness. The system asks: "What defines 'habitual'? Frequency-based (3+ purchases/month) or value-based ($500+ per quarter)?" Unlike text-to-SQL's black-box generation, this approach makes reasoning explicit and refinable.
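The four steps above can be sketched as a small loop. This is a heavily simplified illustration under stated assumptions: `PHRASE_INTENTS`, `parse`, and `interpret` are hypothetical, the knowledge graph is reduced to a dictionary keyed by phrase, and the LLM-guided reasoning step is replaced by a plain `ask_user` callback.

```python
from typing import Callable, Dict, List, Tuple

# Step 1 (language understanding), reduced to a hypothetical phrase table.
PHRASE_INTENTS = {
    "habitual buying": "customer behavioral pattern",
    "losing": "negative change over time",
    "stores": "grouping dimension",
}


def parse(question: str) -> Dict[str, str]:
    text = question.lower()
    return {phrase: intent for phrase, intent in PHRASE_INTENTS.items() if phrase in text}


def interpret(
    question: str,
    graph: Dict[str, str],
    ask_user: Callable[[str], str],
    max_rounds: int = 3,
) -> Tuple[Dict[str, str], List[str]]:
    """Resolve each key phrase from the graph, asking the user to fill gaps."""
    resolved: Dict[str, str] = {}
    pending = list(parse(question))
    for _ in range(max_rounds):
        if not pending:
            break
        unresolved = []
        for phrase in pending:
            definition = graph.get(phrase)  # step 2: discovery
            if definition is None:
                # Steps 3 and 4: a real system would reason with an LLM first;
                # here the gap goes straight to a clarifying question.
                definition = ask_user(f"What defines '{phrase}'?")
            if definition:
                resolved[phrase] = definition
            else:
                unresolved.append(phrase)
        pending = unresolved
    return resolved, pending


known = {"stores": "Store dimension", "losing": "quarter-over-quarter decline"}
answers = {"What defines 'habitual buying'?": "3+ purchases/month"}
resolved, pending = interpret(
    "Which stores are losing habitual buying customers?",
    known,
    lambda q: answers.get(q, ""),
)
print(resolved, pending)
```

Nothing is written back to the graph inside the loop; persisting definitions is left to the later approval and learning phase.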

The Multi-Phase Workflow

The interpretation unfolds through distinct phases with user validation at key points:

- Interpretation — Parse, search, construct iteratively with continuous feedback

- Validation — Check completeness, pattern conformance, data availability

- User Approval — Present interpretation in business terms: "I interpreted 'habitual buyers' as customers spending $500+ per quarter, and 'losing' as quarter-over-quarter decline. Is this correct?"

- Execution & Learning — Translate to executable queries, execute, and persist validated elements to the Knowledge Graph for future reuse

Every validated interpretation enriches the graph. New segmentations, calculations, and patterns become discoverable building blocks for future questions. The system gets smarter with every question.
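The phases above, with their approval gate, could be sketched as a simple pipeline in which elements are persisted only after the user approves. This is an illustrative sketch: `Phase`, `run_workflow`, and the callback signatures are hypothetical, and the interpretation is reduced to a mapping from business term to definition.

```python
from enum import Enum
from typing import Callable, Dict, Optional, Tuple


class Phase(Enum):
    INTERPRETATION = "interpretation"
    VALIDATION = "validation"
    USER_APPROVAL = "user approval"
    EXECUTION_AND_LEARNING = "execution and learning"


def run_workflow(
    interpretation: Dict[str, str],
    is_valid: Callable[[Dict[str, str]], bool],
    approve: Callable[[str], bool],
    execute: Callable[[Dict[str, str]], object],
    graph: Dict[str, str],
) -> Tuple[Phase, Optional[object]]:
    """Return the last phase reached and the execution result, if any."""
    if not is_valid(interpretation):
        return Phase.VALIDATION, None
    # Present the interpretation in business terms, not SQL.
    summary = "; ".join(f"'{term}' as {meaning}" for term, meaning in interpretation.items())
    if not approve(f"I interpreted {summary}. Is this correct?"):
        return Phase.USER_APPROVAL, None
    result = execute(interpretation)
    graph.update(interpretation)  # persist validated elements for future reuse
    return Phase.EXECUTION_AND_LEARNING, result


graph: Dict[str, str] = {}
interpretation = {
    "habitual buyers": "customers spending $500+ per quarter",
    "losing": "quarter-over-quarter decline",
}
phase, result = run_workflow(
    interpretation,
    is_valid=lambda i: all(i.values()),
    approve=lambda message: True,
    execute=lambda i: "query results",
    graph=graph,
)
print(phase, result, sorted(graph))
```

If the user rejects the summary, the function stops at `Phase.USER_APPROVAL` and leaves the graph untouched, so only confirmed definitions become reusable building blocks.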

Conclusion

This approach represents a fundamentally different way to interpret business questions — one that resolves the critical weaknesses of text-to-SQL agents while preserving self-service speed and flexibility.

| Capability | Model-Driven BI | SQL Notebooks | Text-to-SQL | Spotonix |
|---|---|---|---|---|
| Self-service | ✗ | ✓ | ✓ | ✓ |
| Fast turnaround | ✗ | ✓ | ✓ | ✓ |
| Semantically rich | ✓ | ✗ | ✗ | ✓ |
| Business-friendly | ✗ | ✓ | ✓ | ✓ |
| Explainable | ✓ | ✓ | ✗ | ✓ |
| Handles complexity | ✓ | ✓ | ✗ | ✓ |
| Composable reuse | ✓ | ✗ | ✗ | ✓ |

The system delivers: fast self-service turnaround, arbitrary complexity through composition, business-centric semantic precision, complete auditability, systematic reuse across analyses, and continuous learning from each question.

Institutionalize your knowledge, not your people.